In August, we finished initial work on a new OpenELIS feature: the Viewable Audit Trail.

Jan wrote about the Audit Trail, explaining the impetus and the first phase of development. In short, it came out of discussions with our colleagues in Cote d'Ivoire about ways to better troubleshoot problems, both with samples and the OpenELIS system itself.

As part of an ongoing process to improve the OpenELIS user experience, I've been thinking about how best to implement features that will make the system easier to use and give users faster, more intuitive ways to get their work done. So as part of rolling out the Audit Trail, I took the opportunity to use it as a test case for some of these features.

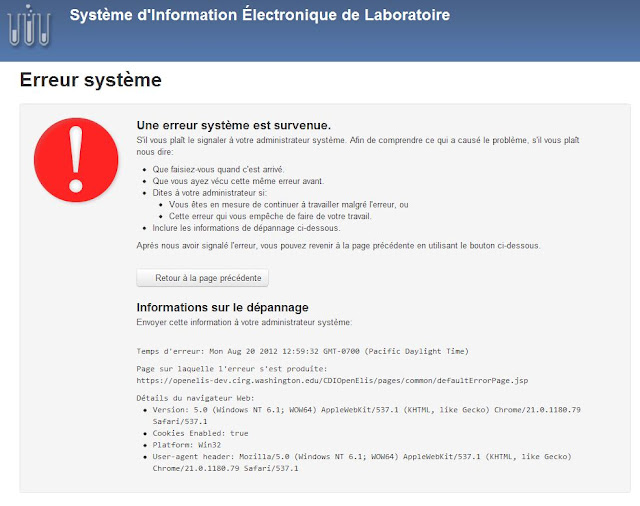

A picture can say a thousand words, so here's a quick comparison of the "old" and "new" versions:

| Old Audit Trail | New Audit Trail |
| --- | --- |

We've done a few things here:

- Added summary information at the top: the order/accession number, the date it was created and the current status.

- Just below that is a new row with tools for sorting through the audit trail. It shows the number of "entries" in the database and lets you filter the entries either by searching or by selecting a category. This is especially useful for an order that may have hundreds of rows in the database.

- The table itself has a new, more modern look and can be sorted.
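To make the filtering and sorting tools a bit more concrete, here is a minimal sketch of the kind of logic behind them, written in TypeScript against plain data rather than the web page. It is not OpenELIS code: the real screen delegates this work to DataTables in the browser, and the `AuditEntry` shape, its field names, the category values and the sample rows below are all hypothetical.

```typescript
// Hypothetical shape of one audit-trail row; the real OpenELIS fields may differ.
interface AuditEntry {
  timestamp: string; // ISO 8601 UTC string, so plain string order is chronological
  user: string;
  category: string; // illustrative values such as "Sample" or "Result"
  description: string;
}

// Keep entries that match the selected category (if any) and whose
// user, category or description contains the search term, ignoring case.
function filterEntries(
  entries: AuditEntry[],
  search: string,
  category?: string,
): AuditEntry[] {
  const term = search.trim().toLowerCase();
  return entries.filter((e) => {
    if (category !== undefined && e.category !== category) return false;
    if (term === "") return true;
    return [e.user, e.category, e.description].some(
      (field) => field.toLowerCase().indexOf(term) !== -1,
    );
  });
}

// Return a sorted copy by one column, leaving the input array untouched.
// Uses plain string comparison, which suits ISO 8601 timestamps.
function sortEntries(
  entries: AuditEntry[],
  key: keyof AuditEntry,
  ascending = true,
): AuditEntry[] {
  const dir = ascending ? 1 : -1;
  return entries.slice().sort((a, b) => {
    if (a[key] < b[key]) return -dir;
    if (a[key] > b[key]) return dir;
    return 0;
  });
}

// Made-up sample data for illustration only.
const entries: AuditEntry[] = [
  { timestamp: "2013-08-01T09:15:00Z", user: "alice", category: "Sample", description: "Sample received" },
  { timestamp: "2013-08-01T10:02:00Z", user: "bob", category: "Result", description: "Result entered" },
  { timestamp: "2013-08-02T08:30:00Z", user: "alice", category: "Result", description: "Result validated" },
];

// Pick the "Result" category, then show the newest entries first.
const results = filterEntries(entries, "", "Result");
const newestFirst = sortEntries(results, "timestamp", false);
console.log(`${newestFirst.length} of ${entries.length} entries`); // prints "2 of 3 entries"
```

Returning new arrays instead of modifying the original keeps the full list available, for example to show the total number of entries alongside a filtered view.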

If a picture is worth a thousand words, surely a video is worth even more. Here's a short screencast that shows these improvements in action.

We know OpenELIS users have a lot to do. All of these user experience (UX) improvements are intended to make reaching the user's goal (in this case, understanding where a problem may have occurred) easier, faster and, dare we say, more pleasurable. We hope that being able to parse through tables more quickly and getting better feedback from the system will lead to significant savings in time spent per order and a reduction in error rates.

We're making all this happen by employing some new and powerful software frameworks. The new Audit Trail UI relies on:

- jQuery - an open-source JavaScript library that has all sorts of tools for "client-side scripting". That simply means being able to do things on screen after the page has loaded, for example, changing the sorting order of a table.

- DataTables - an open-source jQuery plug-in that handles the interactions with the HTML table, such as sorting and searching.

- Bootstrap - an open-source CSS framework from the good folks at Twitter. It's a flexible and efficient way to build UI elements in web apps that work across all major browsers.

The updated Audit Trail will be part of the next set of OpenELIS releases, and the new UI will soon be applied to other sections of OpenELIS.

As always, please let us know if you have any thoughts or questions.

- Mark